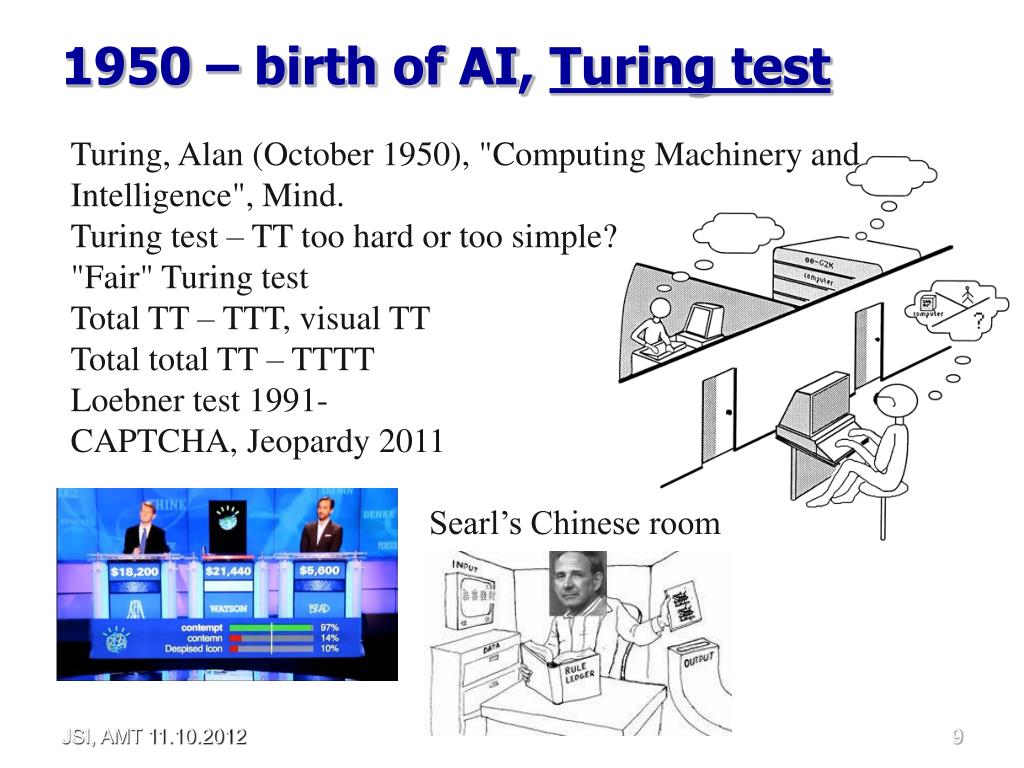


So we learned a bit about the Turing Test in my AI class. But I see it as being fairly limited in scope. What if I'm not designing an AI to converse with humans? It favors acting humanly over acting rationally. For example, if I'm designing an AI to control nuclear missiles, do I really want it to act human? Granted, this is an extreme example, but you get the idea.

It could be influenced by factors that don't indicate that the computer can think humanly. For example, suppose I ask what 2334 * 321 is. I could tell that the device is a computer because it will probably answer me fairly quickly, while a human would have to figure it out.

Now, I'm sure that the Turing Test still has its place in determining machine intelligence. I'm just curious if there are any other tests that overcome its limitations (probably trading them for other limitations). Are there any alternatives? For that matter, am I wrong as to what I perceive to be its limitations?

EDIT: Let me be clear: I'm not suggesting that the Turing Test should be abandoned.

The Turing Test, which was first intended to detect human-like intelligence in a machine, is fundamentally flawed. But that doesn't mean it cannot be improved or modified further. Here are seven proposed alternatives that could help us distinguish a bot from a human.

Digital Dissection

The Turing Test is not meant to be a judge of the quality of an AI algorithm, but rather of the success of an AI algorithm meant to simulate human intelligence. If we are able to design an AI algorithm that passes this test, then we can say that we are able to develop machines with human intelligence. It's reasonable to assume that there are other tests that would be equally sufficient, but this test is elegant in its simplicity and relative lack of constraints.

By 2029, we may have AI replicas of human beings that are indistinguishable from the real people in conversation. It could become possible to create my chatbot replica, which would talk exactly like me. In fact, it could be tweaked to make a better version of me, one who does not have bad days. There could also be multiple AI versions of my personality. Taking this a step further, some people are working on uploading human minds to a computer. This could potentially enable us to communicate with individuals posthumously, thereby creating a digital afterlife of sorts.
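
The arithmetic question from the text (what is 2334 * 321?) can be made concrete with a small Python sketch. It only illustrates the "answers too quickly" tell described there. The `Reply` type, the `looks_like_machine` function, and the five-second threshold are all hypothetical choices made for this example, not part of any real test.

```python
from dataclasses import dataclass

# Assumed minimum time a person needs to multiply a four-digit number
# by a three-digit number. This value is an arbitrary illustration.
HUMAN_MIN_SECONDS = 5.0


@dataclass
class Reply:
    answer: int
    seconds: float  # time between asking the question and getting the answer


def looks_like_machine(a: int, b: int, reply: Reply,
                       min_seconds: float = HUMAN_MIN_SECONDS) -> bool:
    """Flag a reply as machine-like if it is correct and implausibly fast."""
    correct = reply.answer == a * b
    return correct and reply.seconds < min_seconds


print(2334 * 321)                                            # 749214
print(looks_like_machine(2334, 321, Reply(749214, 0.01)))    # True
print(looks_like_machine(2334, 321, Reply(749214, 40.0)))    # False
```

A check like this measures speed and accuracy, not thinking. A machine could pass it simply by waiting before it answers, which is the limitation the question points at.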